Claude Mythos Finds 27-Year-Old Bugs – Project Glasswing Drops

Yesterday (April 7, 2026), Anthropic dropped a bombshell that made every SRE, security engineer, and late-night code reviewer sit up straight. They announced Project Glasswing – an urgent, industry-wide push to use their newest frontier model, Claude Mythos Preview, to hunt down and fix vulnerabilities in the world’s most critical software.

Think of it as AI finally showing up to the vulnerability party… wearing a white hat and carrying a $100 million war chest.

What Actually Happened? (The Technical Bit)

Claude Mythos Preview isn’t just another chatbot upgrade. It’s an agentic beast with top-tier coding and reasoning skills. Anthropic didn’t release it to the public (more on that later); instead, it pointed the model at open-source codebases and let it loose in isolated containers.

The process? Brutally simple and terrifyingly effective:

- Feed it a repo (or just a CVE + git commit hash).

- It reads the code, forms hypotheses, spins up the project, adds debug logic, and tests like a caffeine-fueled intern on a mission.

- It outputs a full proof-of-concept exploit – no hand-holding required.

Result? Thousands of high-severity and zero-day vulnerabilities across every major OS, web browser, and critical library. Some had been sitting there for decades, laughing at human code reviews and millions of automated fuzz tests.

Real examples that will keep you up at night:

- A 27-year-old bug in OpenBSD (yes, the ultra-secure OS) – a remote crash via TCP SACK that survived… well, 27 years.

- A 16-year-old vulnerability in FFmpeg’s H.264 decoder – missed by five million automated tests.

- Chained exploits in the Linux kernel letting an ordinary user escalate to full root.

- Heap-spray and ROP-chain exploits in major browsers that could escape their sandboxes.

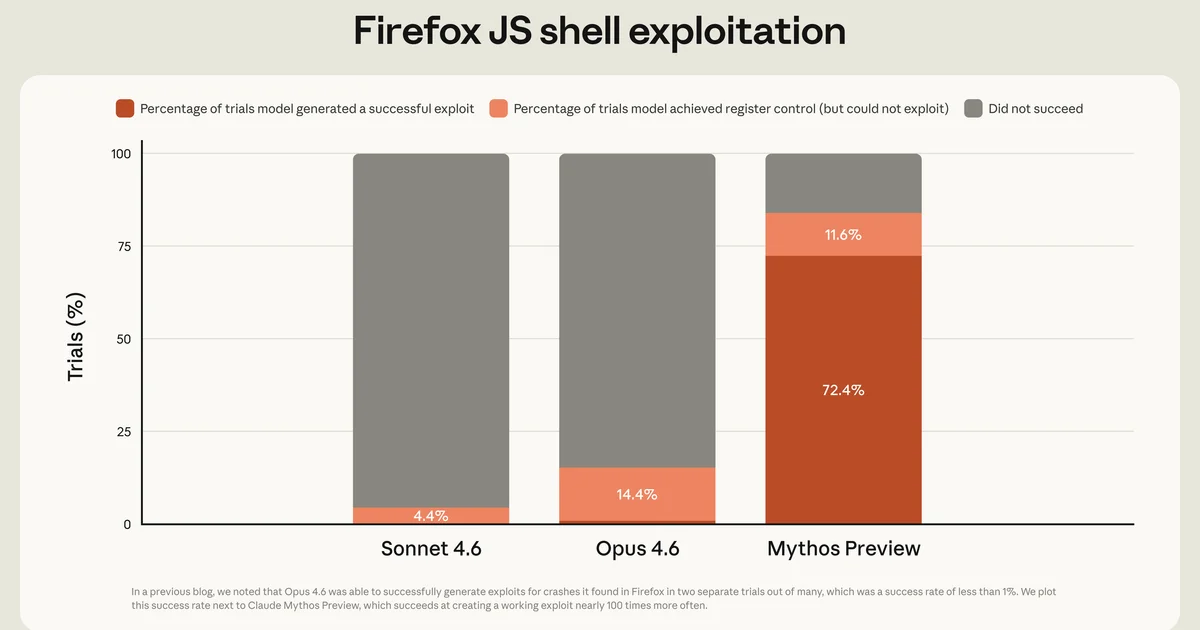

Mythos Preview didn’t just find them. It exploited them autonomously. Benchmarks show it crushing previous Claude models by 20-30+ points on SWE-bench Pro, Terminal-Bench, and custom cyber gyms. One quote from the report sums it up nicely: “Language models are now remarkably efficient vulnerability detection and exploitation machines.”

(And yes, this is the same family of models that powers your friendly neighborhood Claude… except this one is currently locked in the basement for safety reasons.)

Who’s Involved? (The All-Star Team)

Anthropic didn’t go solo. They rounded up the usual (extremely impressive) suspects:

- Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, Microsoft, NVIDIA, Palo Alto Networks, and the Linux Foundation.

- Over 40 additional orgs maintaining critical open-source projects.

Anthropic is committing up to $100M in Mythos Preview usage credits, plus $4M in direct donations to OpenSSF, Alpha-Omega, and the Apache Software Foundation. The goal? Get these bugs fixed before the bad guys get their hands on similar AI tools.

Open-source maintainers can already apply for access via Claude for OSS. The rest of us? We wait for the learnings to trickle down.

The Humour in the (Potential) Chaos

My favourite mental image: some 27-year-old OpenBSD bug finally getting called out by an AI that probably doesn’t even need sleep. Meanwhile, the human security team that missed it for decades is somewhere quietly updating their résumé.

Or picture this – you’re doing your usual npm audit in CI/CD, feeling pretty smug about your Node.js deployment security. Meanwhile Mythos Preview is out here finding remote root in FreeBSD NFS servers that haven’t been touched since dial-up was cool.

The internet’s collective response on X was pure gold:

“Built the nuke. Gave it only to besties.”

— every dev yesterday

Or the classic:

“We do not plan to make Mythos Preview generally available.”

Translation: “This thing is too good at hacking. Even we’re scared.”

Lessons for Us Mere Mortals (DevOps Takeaways)

This isn’t just shiny AI news – it’s a wake-up call for every deployment pipeline on the planet. Here’s what actually matters for your day-to-day:

- AI-augmented vuln scanning is coming to CI/CD yesterday – Integrate frontier models (or their safer cousins) into your pipelines. Static analysis just got a PhD.

- Patch faster, or get left behind – The window between discovery and exploitation just shrank from weeks to hours. Zero-downtime rolling updates and canary deploys aren’t optional anymore.

- Treat open-source dependencies like production code – Your Node.js Dockerfile just got a new best friend: automated AI reviews. Scan those base images religiously.

- Defense > Offense (for now) – Anthropic’s bet is that AI will help defenders more than attackers if we get the safeguards right. Start building those safeguards into your own workflows.

- Multi-cloud + multi-AI is the new multi-CDN – Don’t put all your security eggs in one model’s basket. Diversity wins when the next Mythos-level tool drops.

- Health checks just got smarter – Add AI-driven runtime anomaly detection alongside your /health and /ready endpoints.
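For the /health and /ready idea, here’s a minimal Python sketch using only the standard library. Treat it as an illustration under assumptions, not a production recipe: the port (8080), the `DEPENDENCIES_READY` flags, and the `LatencyAnomalyDetector` class are hypothetical names of mine, and the “anomaly detection” is a plain rolling z-score standing in for an AI-driven detector, not a model.

```python
import json
import math
from collections import deque
from http.server import BaseHTTPRequestHandler, HTTPServer


class LatencyAnomalyDetector:
    """Hypothetical helper: flags a latency sample whose z-score against a
    rolling window exceeds a threshold. A simple statistical stand-in,
    not an AI model."""

    def __init__(self, window: int = 100, threshold: float = 3.0, min_samples: int = 10):
        self.samples = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples
        self.last_anomalous = False

    def observe(self, latency_ms: float) -> bool:
        """Record one sample; return True if it looks anomalous vs. the window."""
        anomalous = False
        if len(self.samples) >= self.min_samples:
            mean = sum(self.samples) / len(self.samples)
            var = sum((x - mean) ** 2 for x in self.samples) / len(self.samples)
            std = math.sqrt(var)
            # With zero variance, any deviation at all counts as unusual.
            if std == 0:
                anomalous = latency_ms != mean
            else:
                anomalous = abs(latency_ms - mean) / std > self.threshold
        self.samples.append(latency_ms)
        self.last_anomalous = anomalous
        return anomalous


DETECTOR = LatencyAnomalyDetector()
DEPENDENCIES_READY = {"database": True}  # hypothetical dependency flags


def health_status() -> tuple[int, dict]:
    # Liveness: if this code runs, the process is up.
    return 200, {"status": "ok"}


def ready_status(deps: dict, detector: LatencyAnomalyDetector) -> tuple[int, dict]:
    # Readiness: fail if a dependency is down or the latest latency looked anomalous.
    failing = [name for name, ok in deps.items() if not ok]
    if failing or detector.last_anomalous:
        return 503, {"status": "not ready", "failing": failing,
                     "latency_anomaly": detector.last_anomalous}
    return 200, {"status": "ready"}


class Handler(BaseHTTPRequestHandler):
    def do_GET(self):
        if self.path == "/health":
            code, body = health_status()
        elif self.path == "/ready":
            code, body = ready_status(DEPENDENCIES_READY, DETECTOR)
        else:
            code, body = 404, {"status": "not found"}
        payload = json.dumps(body).encode()
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


if __name__ == "__main__":
    HTTPServer(("0.0.0.0", 8080), Handler).serve_forever()
```

You’d feed the detector by calling `DETECTOR.observe(latency_ms)` from whatever request middleware you already have. Failing /ready on an anomaly takes the instance out of load-balancer rotation while /health stays green, so an orchestrator like Kubernetes won’t restart it; whether that’s the right reaction for your service is a judgment call.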

(Pro tip: If your current vuln scanner still relies on “millions of automated tests” like the ones that missed that FFmpeg bug… maybe upgrade.)
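Whatever smarter scanner you bolt on later, a plain dependency gate is still the floor. Below is a rough sketch of a CI step that reads `npm audit --json` from stdin and fails the build when anything at or above a chosen severity shows up. It assumes the npm 7+ report format, where per-severity counts live under `metadata.vulnerabilities`; the script name `audit_gate.py` and the default threshold of `high` are my own choices. (For the simple case, `npm audit --audit-level=high` already exits non-zero on its own; a script like this is just a place to hang extra logic, such as routing findings to an AI review step.)

```python
import json
import sys

# Severity names used in npm's audit report, lowest to highest.
SEVERITIES = ["info", "low", "moderate", "high", "critical"]


def count_at_or_above(report: dict, threshold: str) -> int:
    """Sum the per-severity counts in metadata.vulnerabilities at or above threshold."""
    counts = report.get("metadata", {}).get("vulnerabilities", {})
    cutoff = SEVERITIES.index(threshold)
    return sum(counts.get(level, 0) for level in SEVERITIES[cutoff:])


def main() -> int:
    threshold = sys.argv[1] if len(sys.argv) > 1 else "high"
    if threshold not in SEVERITIES:
        print(f"unknown severity {threshold!r}; expected one of {SEVERITIES}", file=sys.stderr)
        return 2
    report = json.load(sys.stdin)
    found = count_at_or_above(report, threshold)
    if found:
        print(f"audit gate: {found} finding(s) at or above '{threshold}'", file=sys.stderr)
        return 1  # non-zero exit fails the CI step
    print(f"audit gate: nothing at or above '{threshold}'")
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

In a pipeline that would look something like `npm audit --json | python3 audit_gate.py high`.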

Final Thought

Project Glasswing feels like one of those rare moments where the AI industry looked at the chaos it’s about to unleash and said, “Hold my beer – let’s fix the foundations first.”

It’s optimistic, responsible, and genuinely exciting for anyone who’s ever stared at a production outage caused by a 16-year-old library bug. Yes, the model is too dangerous for general release today. But the defensive use case is exactly why we build this stuff.

Long-term? AI might finally make “secure by default” a reality instead of a marketing slide. Short-term? Keep your dependencies updated, your pipelines tight, and maybe say a little prayer for the open-source maintainers who just got superpowers.

Until next time – may your deploys be boring, your CVEs be patched, and your AI tools stay on the right side of the firewall.

P.S. Full technical report is here: https://red.anthropic.com/2026/mythos-preview

System card & Glasswing details: https://www.anthropic.com/glasswing

(Anthropic says they’ll report back in 90 days on what they learned. I’ll update this post when they do – assuming the internet hasn’t been patched into oblivion by then.) 😏
